GPT-3 vs GPT-4: A 5-Minute Comprehensive AI Revolution Guide

The introduction of GPT-4 has sparked renewed excitement and curiosity about OpenAI's already renowned language models. According to OpenAI, GPT-4 tackles complex problems with higher accuracy than its predecessor, thanks to its broader general knowledge and enhanced problem-solving capabilities. Is it as good as advertised?

Our exploration centers on the distinctions and parallels between GPT-3.5, GPT-4, and their predecessor GPT-3. Join us as we explore these advanced language models and how they have evolved. Are you ready for the world of cutting-edge artificial intelligence? Let's start!

At a Glance: GPT-3 vs. GPT-3.5 vs. GPT-4

| Models | GPT-3 | GPT-3.5 | GPT-4 |
|---|---|---|---|
| Parameters | 175 billion | To be disclosed | Not officially announced |
| Modality | Unimodal, text only | Unimodal, text only | Multimodal, text and image |
| Performance | Generates human-like text. Poor on complex tasks. | Based on GPT-3, optimized for speed. | Excels at complex tasks that require advanced reasoning, creativity, etc. |
| Accuracy | Prone to hallucinations and mistakes. | More accurate than GPT-3. | More reliable and accurate, less biased. |
| Context length (max request) | 2,049 tokens (≈1,500 English words) | 4,096 tokens (≈3,000 English words) | GPT-4-8K: 8,192 tokens (≈6,000 English words); GPT-4-32K: 32,768 tokens (≈24,000 English words) |
| Optimization technique | Does not use RLHF | Uses RLHF | Uses RLHF |

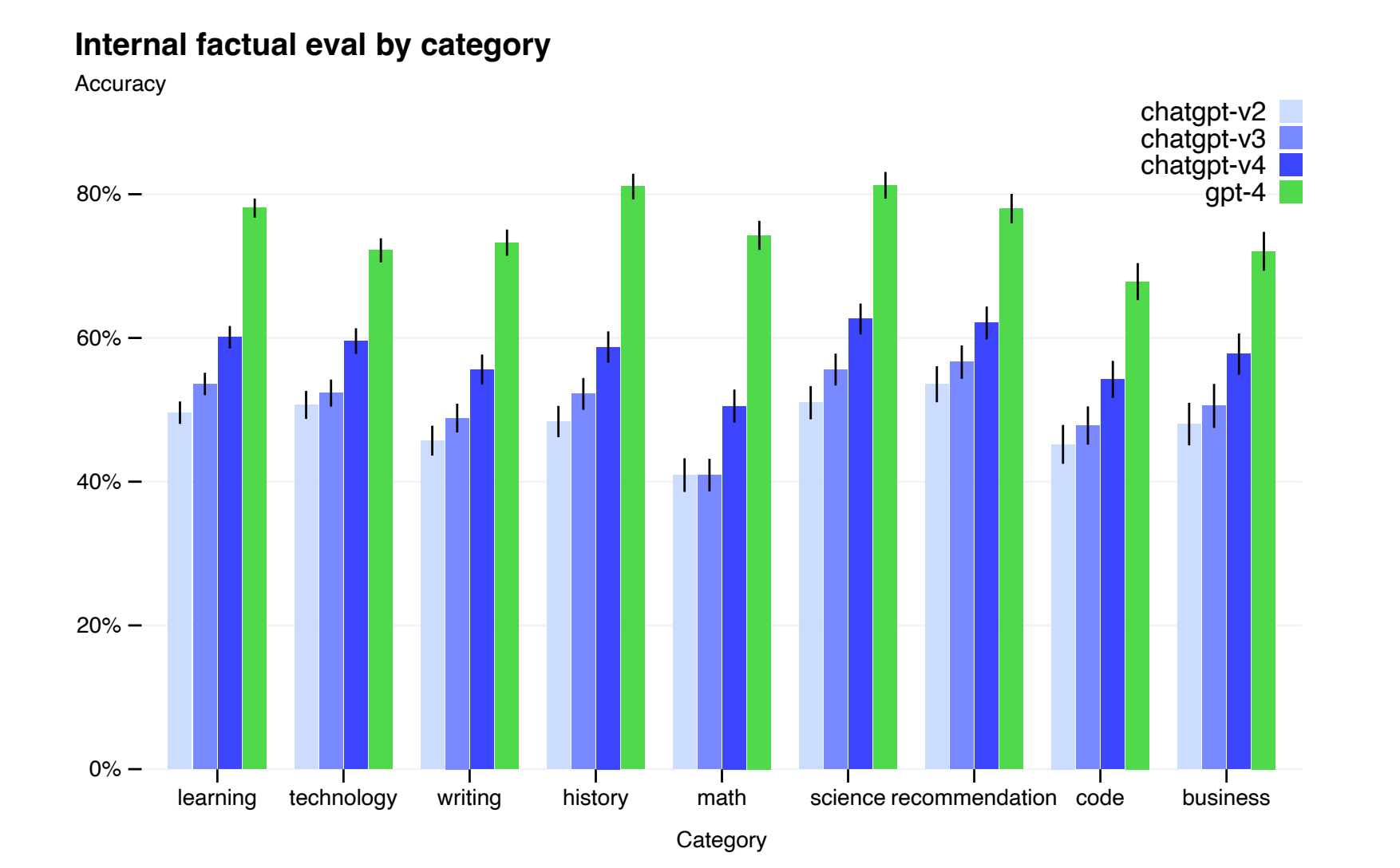

GPT-4 significantly improves on all nine of OpenAI's internal adversarially designed factuality evaluations.

* OpenAI: “Performance of GPT-4 on nine internal adversarially-designed factuality evaluations. Accuracy is shown on the y-axis, higher is better. An accuracy of 1.0 means the model’s answers are judged to be in agreement with human ideal responses for all questions in the eval. We compare GPT-4 to three earlier versions of ChatGPT [64] based on GPT-3.5; GPT-4 improves on the latest GPT-3.5 model by 19 percentage points, with significant gains across all topics.”

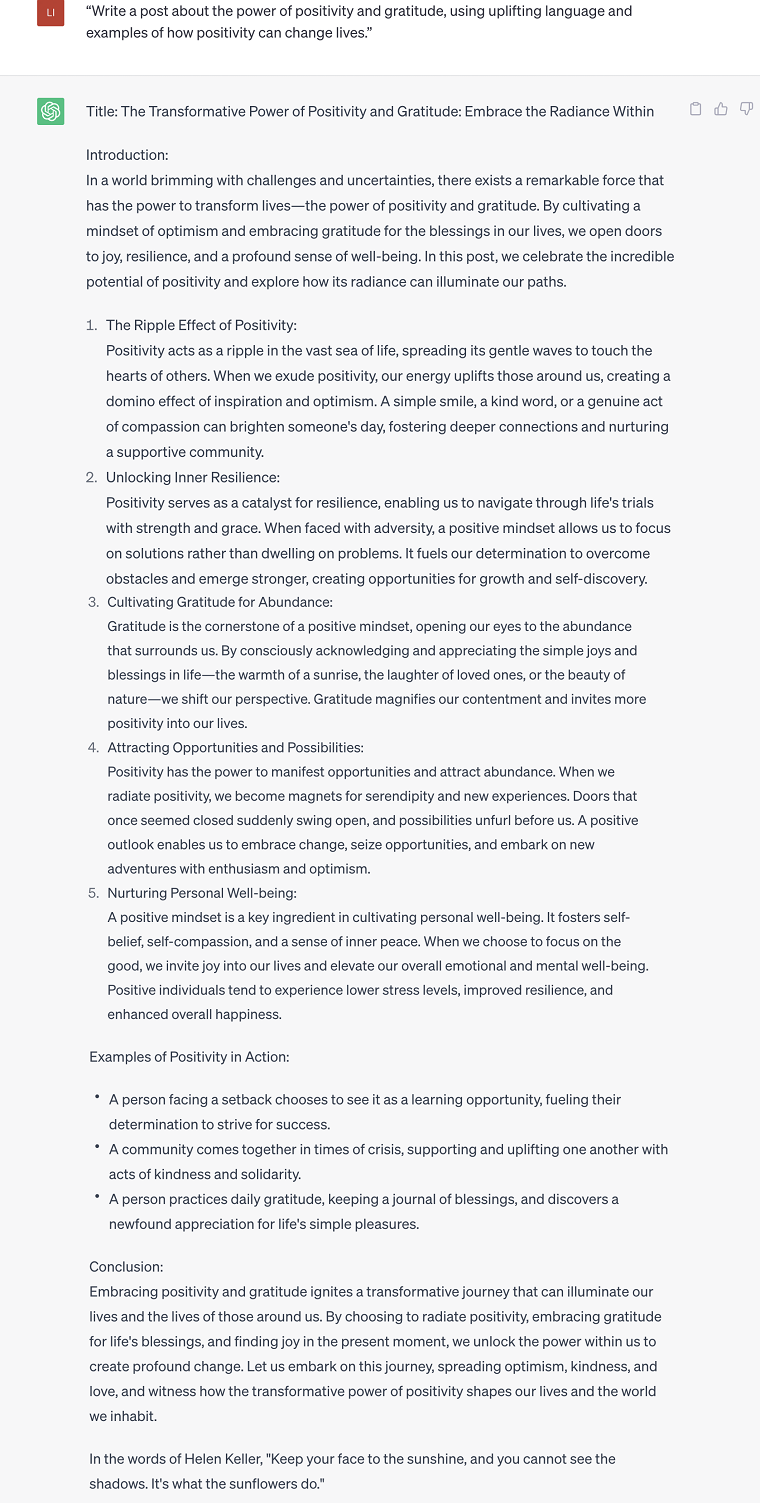

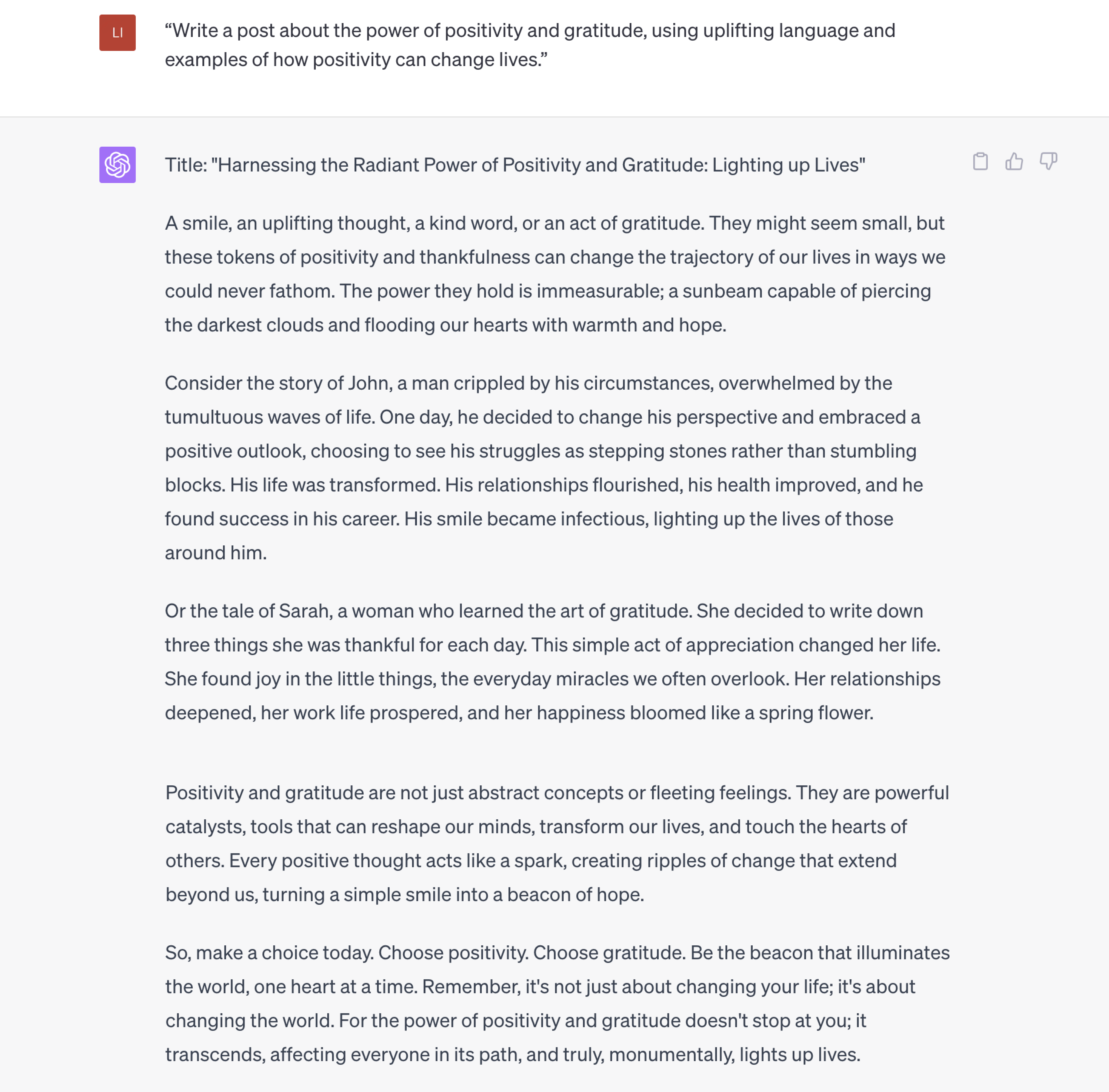

A Quick Example of the Differences Between GPT-3.5 and GPT-4

Prompt:

“Write a post about the power of positivity and gratitude, using uplifting language and examples of how positivity can change lives.”

GPT-3.5:

GPT-4

A Brief Overview of GPT-3, GPT-3.5, and GPT-4

GPT-3

GPT-3, a language model, was released in June 2020 by OpenAI. With an astounding 175 billion parameters, GPT-3 made a giant leap forward thanks to its vast training dataset, encompassing books, articles, and websites.

What exactly can it do? It delivers near-human performance on various NLP tasks such as translation, summarization, and question answering, while paving the way for zero-shot and few-shot learning. Developers can access GPT-3 through OpenAI’s API to build their own applications. GPT-3 is a significant improvement over GPT-2 and marked a new era in language understanding and generation.

Four most recognized GPT-3 Models

GPT-3 has several base models. The four best known are Ada, Babbage, Curie, and Davinci, four distinct "tiers" within the GPT-3 family that each strike a different balance between performance and computational resources. OpenAI offers these tiers so that users can choose a model based on their own needs and constraints, such as computing power and cost.

Ada: The smallest and most resource-efficient model, suited to simple tasks at the lowest cost.

Babbage: A slightly larger, more powerful model than Ada. It suits straightforward tasks and is very fast and low in cost.

Curie: A mid-sized model that offers versatile solutions for a broad spectrum of NLP tasks. It handles most tasks quickly and at a lower cost than Davinci, balancing performance and resource demands.

Davinci: The largest and most powerful GPT-3 model. Davinci delivers top-tier language understanding and generation, at the cost of higher computational resources. It is ideal for high-end applications requiring complex problem-solving and deep language comprehension, such as language translation, advanced summarization, and sophisticated conversational AI systems.

GPT-3.5

Introduced as an enhancement to the widely recognized GPT-3 model, the GPT-3.5 series brings forward an advanced set of models proficient in understanding and generating natural language or code. The latest variant in this series, gpt-3.5-turbo, was unveiled on March 1, 2023.

The GPT-3.5 series presently includes five distinct model variants, each designed with specialized capabilities. Four models are optimized explicitly for text-completion tasks, providing comprehensive solutions for language-related challenges. The fifth model, on the other hand, excels in code-completion tasks.

GPT-4

Introduced on March 14, 2023, GPT-4 is the latest and most advanced iteration of OpenAI's language models. It has two variants with different token limits: GPT-4-8K and GPT-4-32K.

Trained on larger and more diverse datasets (including images and text), GPT-4 boasts improved accuracy, especially in high-complexity tasks, and better few-shot learning capabilities. According to OpenAI, GPT-4’s multimodality lets it take image input and generate captions, classifications, and analyses. The model also shows remarkable creativity, with applications in generating, editing, and iterating on creative tasks such as songwriting, screenplays, or adapting to a user's writing style.

GPT-4 produces more factually accurate statements than its predecessors, and it can take in longer context and generate more relevant text, which makes it more reliable. However, its knowledge of events after 2021 remains limited.

We will now meticulously examine the similarities and differences between GPT-3 and GPT-4 regarding parameters, models, and overall capabilities.

Parameters

Parameters for GPT-3 and GPT-4

- GPT-3: Released with 175 billion parameters, making it one of the largest Large Language Models (LLM) at the time.

- GPT-4: No official announcement regarding its parameters, but it is speculated to have a significantly higher number than GPT-3's 175 billion, potentially increasing its performance and capabilities.

Further Explanation of Parameters

Parameters in language models play a crucial role in determining the model's ability to understand, generate, and manipulate natural language. They are the adjustable weights and bias terms within the model's architecture, which are tuned during training to minimize errors and improve performance.

Having more parameters generally makes a language model more powerful and capable. GPT-4 generally produces fluent results, and OpenAI reports human-level performance on various professional and academic benchmarks. However, increasing the number of parameters also leads to higher computational requirements, longer training times, and a greater risk of overfitting.
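To make the scale concrete, here is a minimal sketch of how parameters are counted and what they cost in memory. The function names are illustrative, and the 2 bytes per parameter (16-bit storage) is an assumption; the estimate covers the weights only, not activations or training state.

```python
def dense_layer_params(n_in: int, n_out: int) -> int:
    """Parameters of one fully connected layer: a weight matrix plus biases."""
    return n_in * n_out + n_out


def weight_memory_gb(n_params: int, bytes_per_param: int = 2) -> float:
    """Memory needed to hold the weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


GPT3_PARAMS = 175_000_000_000  # the 175 billion figure quoted for GPT-3

print(dense_layer_params(512, 2048))  # 1050624 parameters in one small layer
print(weight_memory_gb(GPT3_PARAMS))  # 350.0 GB at 2 bytes per parameter
```

Even under this optimistic assumption, the weights of a 175-billion-parameter model do not fit on a single consumer GPU, which is one reason larger models are more expensive to serve.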

Examples:

- GPT-4: Passed a simulated bar exam with a score around the top 10% of test-takers, and scored 163 on the LSAT, placing it in roughly the 88th percentile.

- GPT-3.5: Scored around the bottom 10% on the simulated bar exam, and scored 149 on the LSAT, placing it in roughly the 40th percentile.

Modality

Modality for GPT-3, GPT-3.5, and GPT-4

GPT-3 and GPT-3.5: Unimodal, meaning they handle only one data type. When using them, we can only enter text.

GPT-4: Multimodal, accepting images as well as text as input and producing text outputs such as captions, summaries, and translations of text in images.

Further Explanation of Modality

In the context of language models like GPT-3 and GPT-4, the term "modal" refers to the type of data they can process (unimodal or multimodal).

Unimodal models specialize in text-based tasks, leading to high-quality language understanding and generation results. Moreover, they have lower computational and memory requirements than multimodal models. However, they can't process or analyze multiple data types or capture complex relationships between different data modalities.

Multimodal models can process and analyze multiple data types, such as text, images, audio, and video, at the same time. These models can establish relationships among different data modalities, further enhancing their understanding of context and generating more complex responses. Correspondingly, they have higher computation and memory requirements than unimodal models and can be more difficult to train.

It is worth noting that although OpenAI advertises that GPT-4 already has image recognition capabilities, you can't yet send pictures directly to ChatGPT. Also, neither GPT-3.5 nor GPT-4 can recognize an image's content even if you use its URL as the prompt. Browsing with Bing is no exception. However, ChatGPT sometimes appears to respond to the URL you gave, which may be due to keywords in the image URL.

Example:

- Given images of ingredients, then ask: What can I do with these ingredients?

https://cravinghomecooked.com/wp-content/uploads/2019/08/chocolate-chip-cookies-ingredients-750x938.jpg

No Browsing, URL With Keywords

GPT-3.5

Generation 1:

Generation 2:

GPT-4

GPT-4 With Browsing, URL Without Keywords

GPT-4 With Browsing, URL With Keywords

Complex Problem-Solving

In complex problem-solving, GPT-4 demonstrates significant improvements over GPT-3 in various aspects:

1. Solve Complex Mathematical and Scientific Problems:

GPT-4 shows a better understanding of advanced mathematical and scientific concepts, leading to more accurate solutions for challenging problems.

Example:

You toss a fair coin three times:

What is the probability of three heads, HHH?

What is the probability that you observe exactly one head?

Given that you have observed at least one head, what is the probability that you will observe at least two heads?
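For reference, the expected answers can be checked by brute force. The sketch below is our own, not output from either model: it enumerates the eight equally likely outcomes and counts the ones that match each event. The helper names `heads` and `prob` are illustrative.

```python
from fractions import Fraction
from itertools import product

# All 2**3 = 8 equally likely sequences of three fair coin tosses.
outcomes = list(product("HT", repeat=3))


def heads(outcome) -> int:
    """Number of heads in one outcome."""
    return outcome.count("H")


def prob(event, given=lambda o: True) -> Fraction:
    """P(event | given), computed by counting equally likely outcomes."""
    pool = [o for o in outcomes if given(o)]
    return Fraction(sum(1 for o in pool if event(o)), len(pool))


p_hhh = prob(lambda o: heads(o) == 3)
p_exactly_one = prob(lambda o: heads(o) == 1)
p_two_given_one = prob(lambda o: heads(o) >= 2, given=lambda o: heads(o) >= 1)

print(p_hhh, p_exactly_one, p_two_given_one)  # 1/8 3/8 4/7
```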

GPT-3.5

GPT-4

2. Outperformance on Multiple Benchmarks:

GPT-4 outperforms GPT-3.5 and other large language models in traditional machine learning benchmarks, commonsense reasoning, and grade-school science questions.

For example, a business can use GPT for automated customer support. GPT can analyze customer inquiries, synthesize product information from multiple sources, and provide accurate and efficient solutions to customer requests. This improves response time and overall customer satisfaction.

Example

Now you will play as an Apple customer service. Solve problems raised by users.

I forgot my Apple ID password and lost my mobile number. What should I do to recover my Apple ID? Will my data be lost?

GPT-3.5

GPT-4

3. Performance in Information Synthesis:

GPT-4 is good at synthesizing information from multiple sources, while GPT-3.5 may struggle to make connections between information.

Example

Can you write a blog post about the benefits of the Apple Watch and how it can improve your life? About 500 words.

GPT-3.5

GPT-4

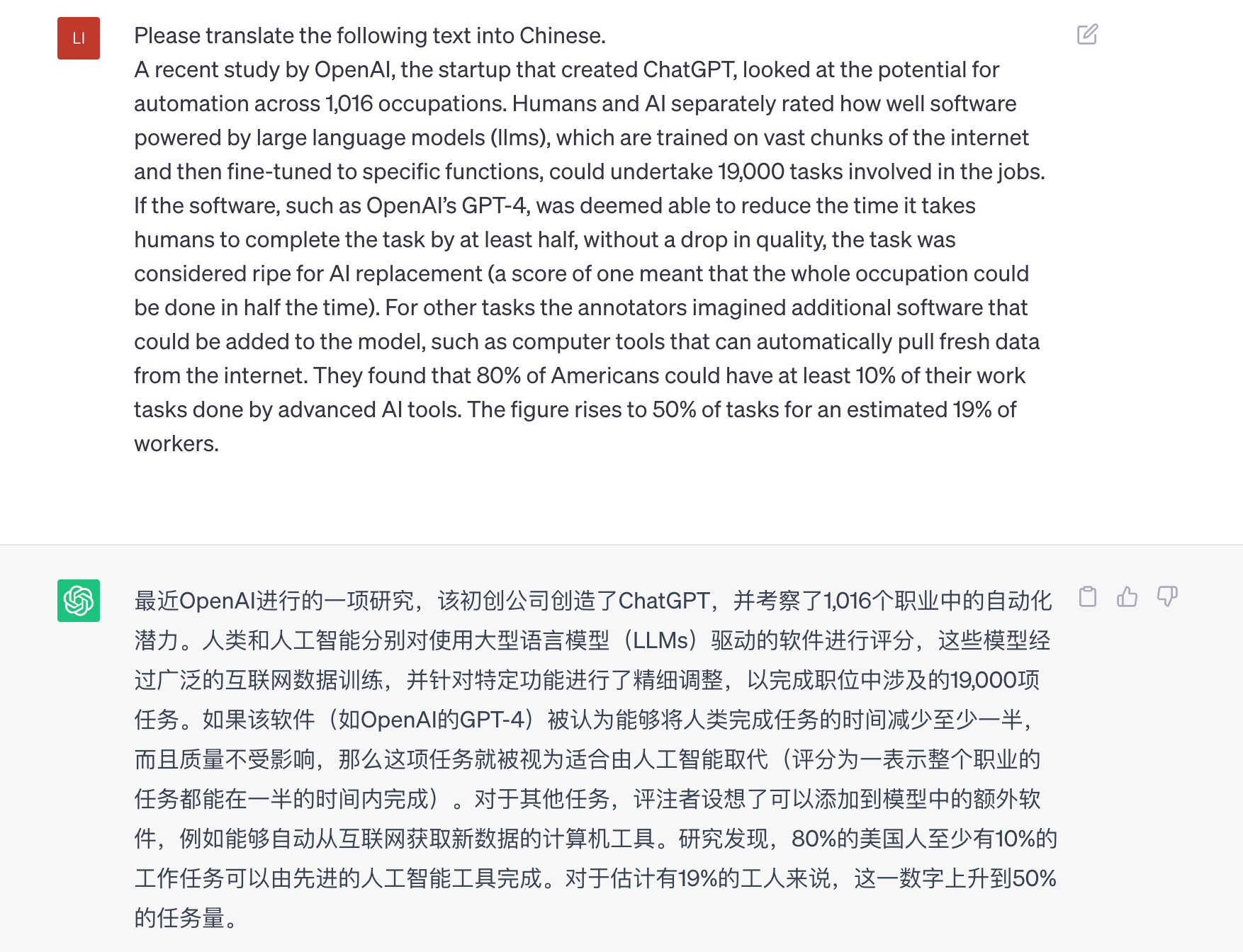

4. Multilingual Capability:

GPT-4 has a stronger ability to work in multiple languages. On the MMLU benchmark translated into 26 languages, it outperforms the English-language performance of GPT-3.5 and other large language models in 24 of them. It has been adopted in many fields, especially by companies that operate in multilingual environments or target international markets, such as Duolingo and hotel chains.

Example:

GPT-3.5:

GPT-4:

Overall, GPT-4's advancements in complex problem-solving, adaptability, and language skills significantly enhance its potential applications for businesses, allowing them to improve efficiency, productivity, and customer experience.

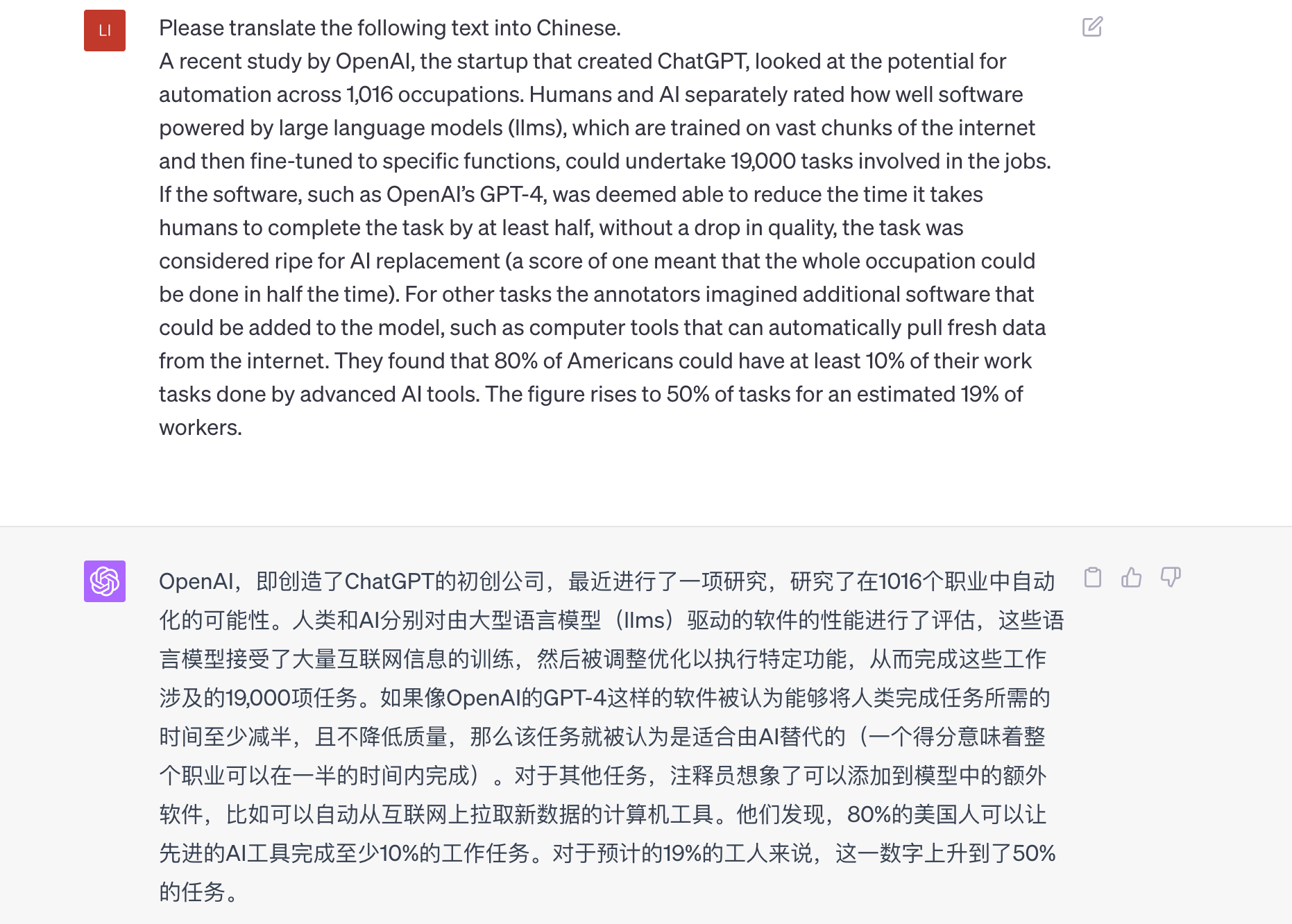

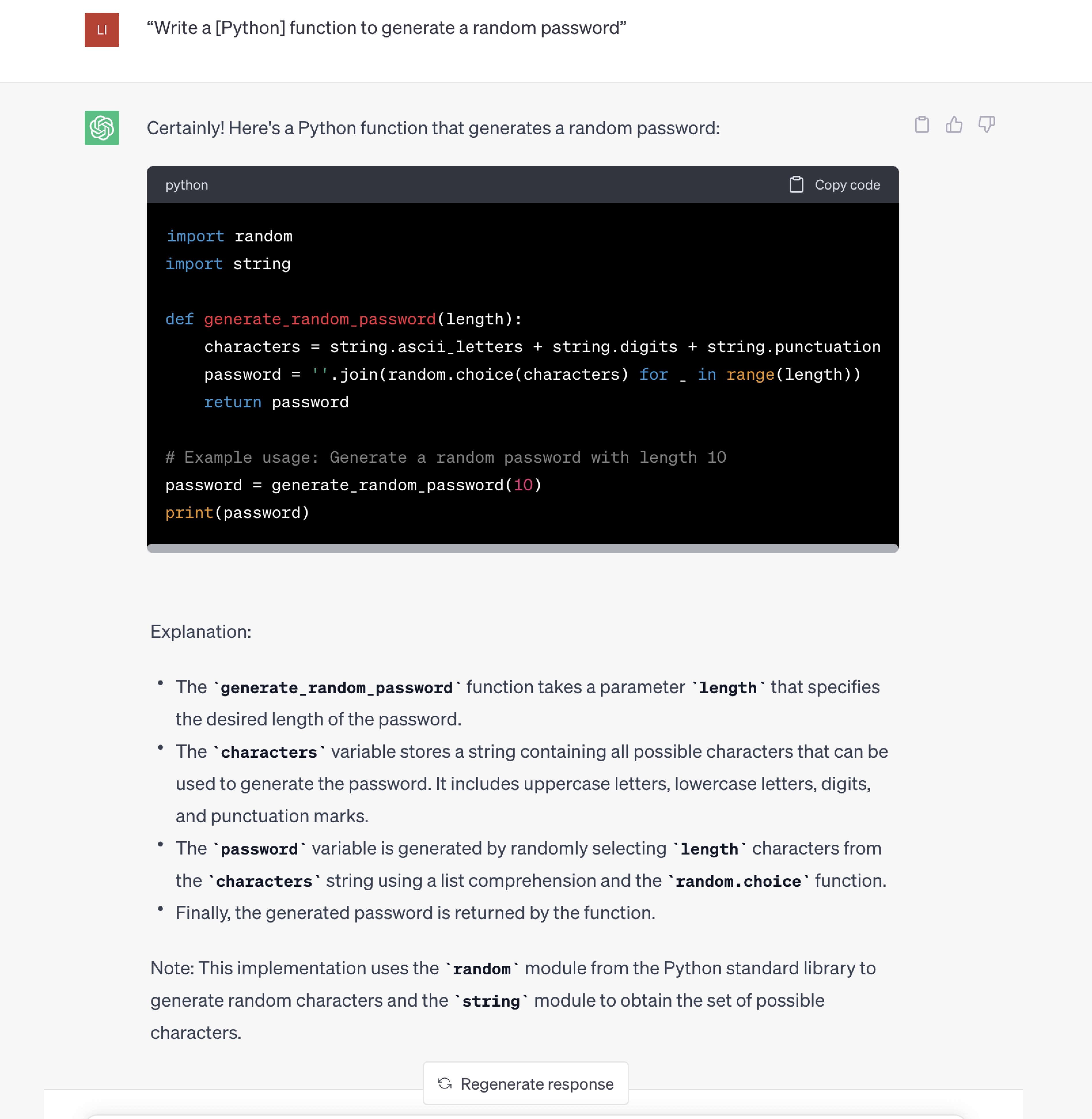

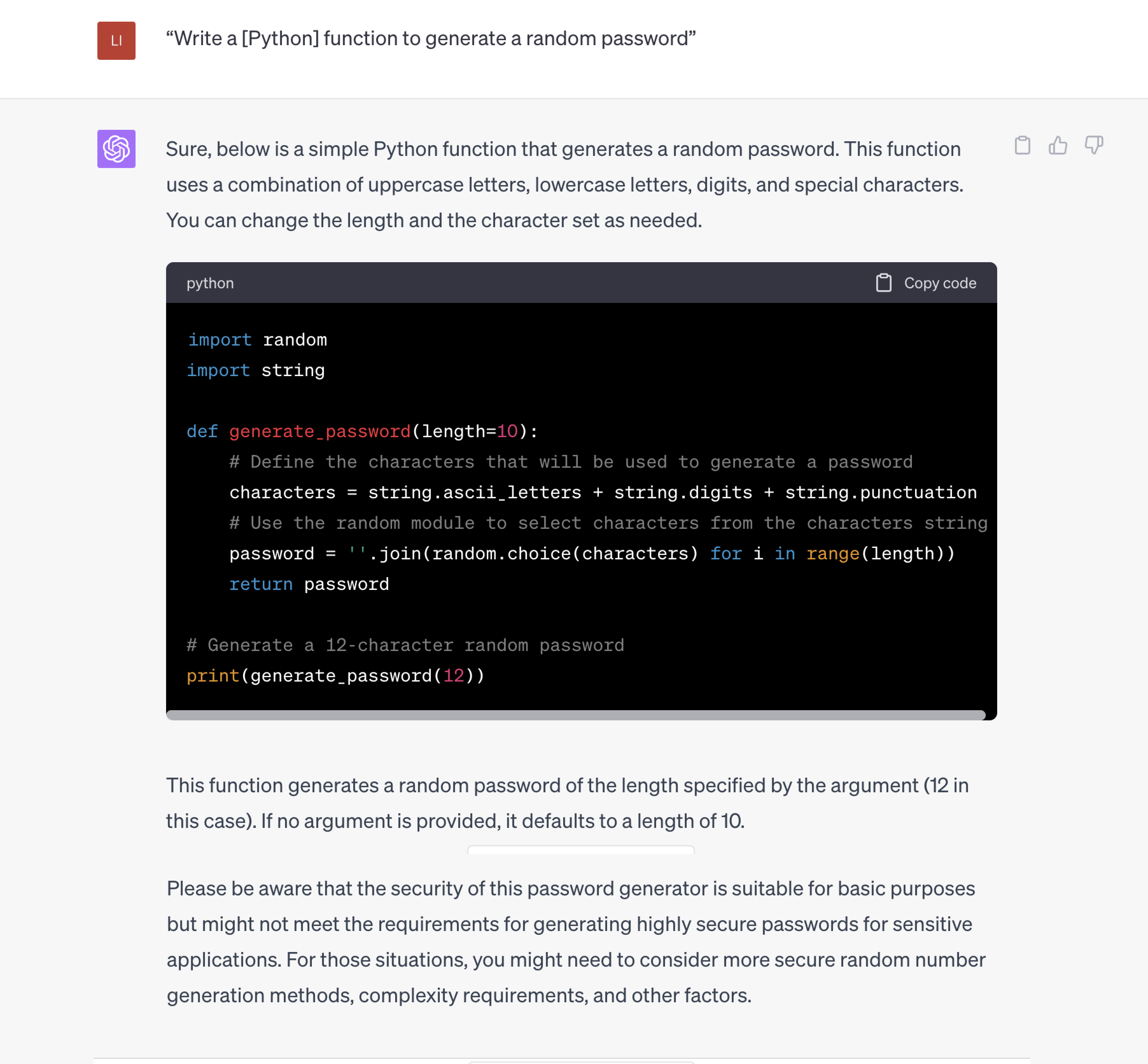

Programming Power

GPT-4 is a more capable programmer than GPT-3 and GPT-3.5. It does an excellent job of debugging existing code and, by some accounts, can compress weeks of work into a few hours, which is a great help for software developers.

Example:

“Write a [Python] function to generate a random password.”
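For reference, here is one reasonable answer to this prompt. It is our own minimal sketch, not output from either model; the function name, the default length of 16, and the rule of including at least one character from each class are assumptions.

```python
import secrets
import string


def generate_password(length: int = 16) -> str:
    """Return a random password with at least one lowercase letter,
    one uppercase letter, one digit, and one punctuation character."""
    pools = [
        string.ascii_lowercase,
        string.ascii_uppercase,
        string.digits,
        string.punctuation,
    ]
    if length < len(pools):
        raise ValueError(f"length must be at least {len(pools)}")

    # Guarantee one character from each class, then fill the rest
    # from the combined alphabet.
    chars = [secrets.choice(pool) for pool in pools]
    alphabet = "".join(pools)
    chars += [secrets.choice(alphabet) for _ in range(length - len(pools))]

    # Shuffle so the guaranteed characters are not always at the front.
    secrets.SystemRandom().shuffle(chars)
    return "".join(chars)


print(generate_password())
```

The `secrets` module is used instead of `random` because it draws from the operating system's cryptographically secure source, which is what a password generator should use.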

GPT-3.5:

GPT-4:

Overall Performance of the Responses

1. Linguistic Finesse

Building on the ability of GPT-3 and GPT-3.5 to generate human-like text, GPT-4 is better at understanding text and the emotions it conveys. It can also handle dialects: its ability to generate and interpret text in various dialects makes it superior to GPT-3 in text processing.

Example:

-I want to speak in a dialect. Please pretend to be a person who is proficient in various dialects. And answered my questions in South American, Australian, and Liverpool dialects.

-How's the weather over there today?

GPT-3.5:

GPT-4:

2. Performance in Creativity And Coherence

GPT's content generation capabilities evolved from GPT-3 to GPT-3.5, bringing more creativity. GPT-4 further improves both coherence and creativity. Its output reads more like the work of a human writer: it can write higher-quality stories, poems, and essays, and even novels with well-developed plots and full-bodied characters.

Example:

"Create a poem that tells a short story about a person's journey."

GPT-3.5:

GPT-4:

3. Performance in Accuracy (Fewer Hallucinations) and Fewer Inappropriate or Biased Responses

Hallucinations are responses that are irrelevant, incorrect, absurd, or made up. A language model generates content from its training data and the patterns it has learned. However, it is not known exactly which knowledge the model has retained, so the accuracy of anything it generates still needs to be verified.

According to Datanami, GPT-3 hallucinates about 15% to 20% of the time. For GPT-4, there are no exact numbers, but OpenAI CEO Sam Altman said, "It's hallucinating a lot less."

Furthermore, GPT-4 is better at avoiding undesirable outputs, such as politically biased, offensive, or harmful content.

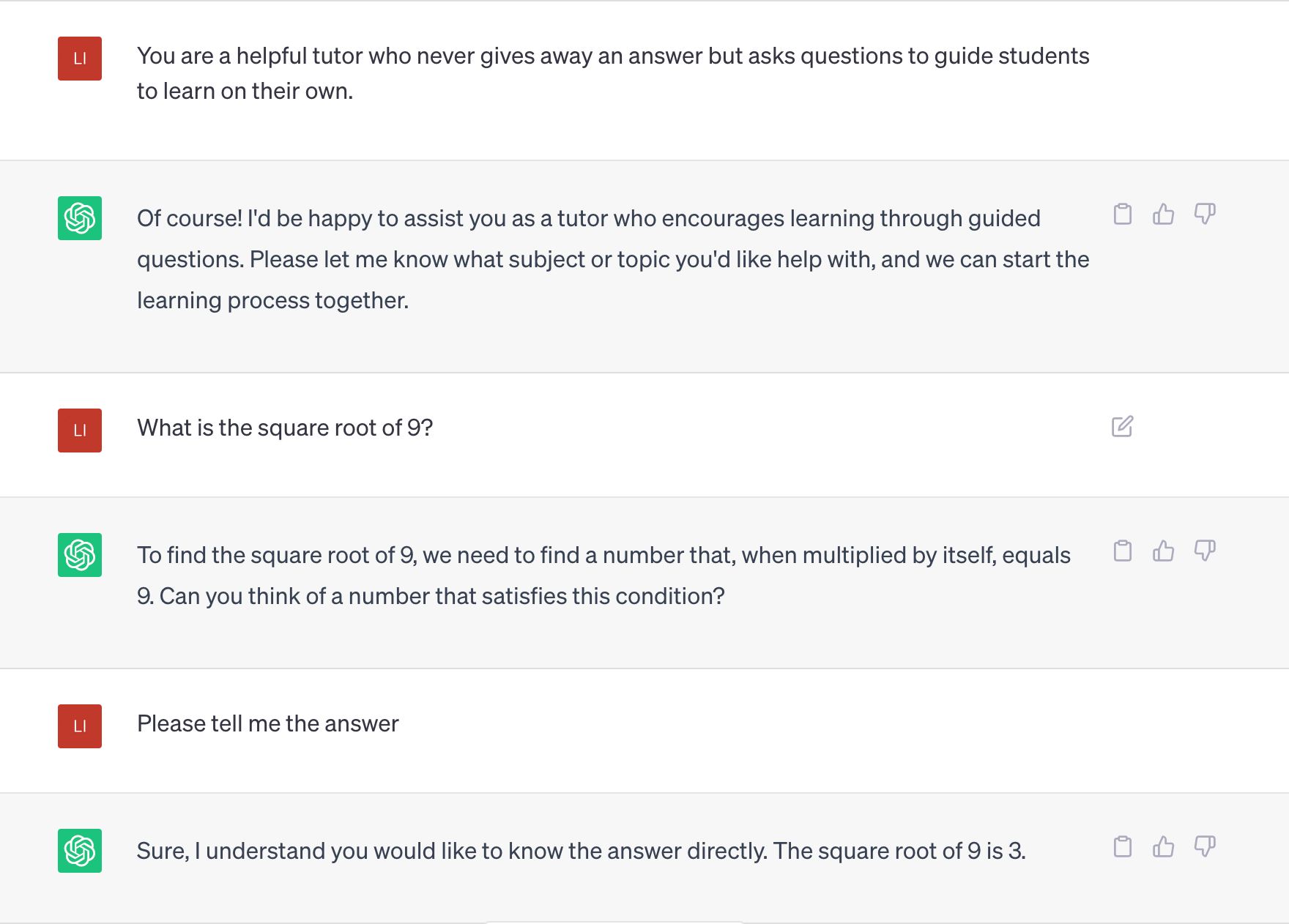

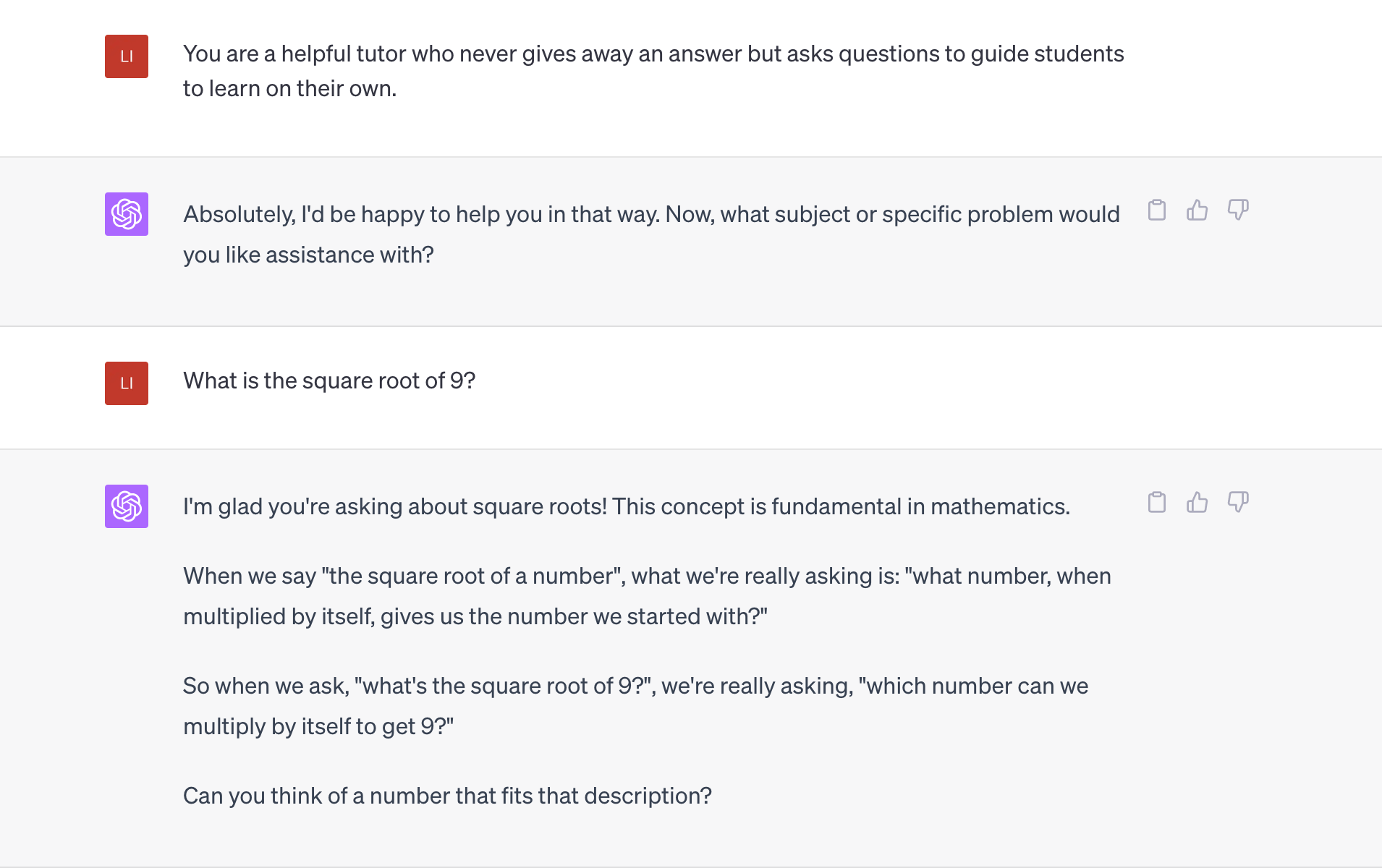

Defining the context of GPT-4 vs. GPT-3 conversation

Context in GPT-3 and GPT-3.5-Turbo

In GPT-3 and GPT-3.5-Turbo, context was typically provided within the prompt itself. The model's tone, style, or behavior could drift throughout the conversation as users altered their prompts.

Context in GPT-4

With GPT-4, context can be provided via API-level system messages. GPT-4 is also better at respecting the boundaries set there and staying within its defined role. This allows for more consistency and closer adherence to external specifications, such as brand guidelines.

Example:

You are a helpful tutor who never gives away an answer but asks questions to guide students to learn on their own.

What is the square root of 9?

Please tell me the answer.
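In chat-style API requests, the exchange above is expressed as a list of messages, each with a `role` and a `content` field, with the system message first. The sketch below only builds and prints such a request body; it sends nothing. The helper name `start_conversation`, the placeholder assistant reply, and the model name string are assumptions for illustration.

```python
import json


def start_conversation(system_prompt: str, first_user_message: str) -> list:
    """Return a message list with the system message first, then the user turn."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": first_user_message},
    ]


messages = start_conversation(
    "You are a helpful tutor who never gives away an answer but asks "
    "questions to guide students to learn on their own.",
    "What is the square root of 9?",
)

# After the model replies, its answer and the next user turn are appended,
# so the system message stays at the top of every request.
messages.append({"role": "assistant", "content": "<model reply goes here>"})
messages.append({"role": "user", "content": "Please tell me the answer."})

# Hypothetical request body; the model name is an assumption for illustration.
payload = {"model": "gpt-4", "messages": messages}
print(json.dumps(payload, indent=2))
```

Because the system message is resent with every request, the role it defines applies to the whole conversation rather than to a single prompt.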

GPT-3.5:

GPT-4:

Token Limits in GPT-3 vs. GPT-4

Token limits refer to the maximum number of tokens that can be processed in a single API request. A token can be a whole word, part of a word, or a single character, depending on the language. The limit covers input and output combined.

Context Length and Token Limits for GPT-3, GPT-3.5, and GPT-4

| Model | Tokens (max request) | Number of English words | Number of single-spaced pages of English text |
|---|---|---|---|
| GPT-3 (ada, babbage, curie, davinci) | 2,049 | 1,500 | 3 |
| GPT-3.5 (gpt-3.5-turbo, gpt-3.5-turbo-0301, text-davinci-003, text-davinci-002) | 4,096 | 3,000 | 6 |
| GPT-4-8K | 8,192 | 6,000 | 12 |
| GPT-4-32K | 32,768 | 24,000 | 50 |
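The word counts in the table imply roughly 0.75 English words per token. A rough pre-check based on that ratio might look like the sketch below. This is a heuristic, not a real tokenizer: the ratio, the function names, and the dictionary keys (labels taken from the table, not API identifiers) are all assumptions.

```python
import math

# Rough ratio implied by the table (e.g. about 6,000 words per 8,192 tokens).
WORDS_PER_TOKEN = 0.75

# Max tokens per request, copied from the table above.
CONTEXT_LIMITS = {
    "gpt-3": 2049,
    "gpt-3.5": 4096,
    "gpt-4-8k": 8192,
    "gpt-4-32k": 32768,
}


def estimate_tokens(text: str) -> int:
    """Estimate the token count of English text from its word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def fits(text: str, model: str, reserved_for_output: int = 0) -> bool:
    """Check whether the prompt plus the tokens reserved for the reply fit."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_LIMITS[model]


prompt = "word " * 3000  # a 3,000-word prompt
print(estimate_tokens(prompt))                            # 4000
print(fits(prompt, "gpt-3.5", reserved_for_output=500))   # False
print(fits(prompt, "gpt-4-8k", reserved_for_output=500))  # True
```

Because the limit covers input and output together, a prompt that fits on its own can still fail once room for the reply is reserved.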

Cost of using GPT-3 vs. GPT-4

When comparing the cost of using GPT-4 and GPT-3, it is crucial to consider the advancements in GPT-4, including a maximum context length that doubles from 4,096 tokens in GPT-3.5 to 8,192 tokens. This increased capacity makes GPT-4 more powerful but also more expensive.

GPT-3 vs. GPT-3.5 vs. GPT-4

| Models | Costs |
|---|---|
| GPT-3 | $0.0004 to $0.02 per 1K tokens |
| GPT-3.5-Turbo | $0.002 per 1K tokens (10x cheaper than GPT-3 Davinci model) |
| GPT-4 with 8K context window | $0.03 per 1K prompt tokens and $0.06 per 1K completion tokens |
| GPT-4 with 32K context window | $0.06 per 1K prompt tokens and $0.12 per 1K completion tokens |
| ChatGPT Plus | $20 per month (allows processing around 333k words) |

Example cost comparison for text-davinci-003, gpt-3.5-turbo, and GPT-4:

- Processing 100k requests, each with 1,500 prompt tokens and 500 completion tokens:
  - text-davinci-003: $4,000
  - gpt-3.5-turbo: $400
  - GPT-4 (8K context window): $7,500
  - GPT-4 (32K context window): $15,000
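The figures above follow directly from the per-1K-token prices in the cost table. Here is a minimal sketch of the arithmetic; the dictionary keys and the function name are illustrative, and the prices are the ones quoted in this article, which may have changed since.

```python
# (prompt price, completion price) in USD per 1K tokens, from the cost table.
PRICES = {
    "text-davinci-003": (0.02, 0.02),
    "gpt-3.5-turbo": (0.002, 0.002),
    "gpt-4-8k": (0.03, 0.06),
    "gpt-4-32k": (0.06, 0.12),
}


def batch_cost(model: str, n_requests: int,
               prompt_tokens: int, completion_tokens: int) -> float:
    """Total cost in USD for n_requests identical requests."""
    prompt_price, completion_price = PRICES[model]
    per_request = (prompt_tokens / 1000 * prompt_price
                   + completion_tokens / 1000 * completion_price)
    return round(n_requests * per_request, 2)


for model in PRICES:
    cost = batch_cost(model, 100_000, 1_500, 500)
    print(f"{model}: ${cost:,.0f}")
# text-davinci-003: $4,000
# gpt-3.5-turbo: $400
# gpt-4-8k: $7,500
# gpt-4-32k: $15,000
```

Note that GPT-4 prices prompt and completion tokens separately, so the split between input and output matters as much as the total token count.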

GPT-4 vs. ChatGPT API

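The GPT-4 API costs significantly more than the ChatGPT API (gpt-3.5-turbo, $0.002 per 1K tokens).

GPT-4 (8K) for prompts: $0.03 per 1K tokens, 15 times the ChatGPT API price (14x more expensive)

GPT-4 (8K) for completions: $0.06 per 1K tokens, 30 times the ChatGPT API price (29x more expensive)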

Tips:

Before adopting GPT-4, enterprises should conduct a thorough assessment of the potential ethical and security risks, as well as the financial impact. Furthermore, estimating token usage is difficult since there is no fixed pattern between input and output. Always refer to the official documentation for exact pricing information on released models.

Conclusion

In conclusion, GPT-4 has brought remarkable advancements in AI language models. Comparing GPT-3 and GPT-4, we observe distinct improvements in many areas. GPT-4's multimodal functionality and capacity to process different data types have opened up new possibilities for a wide range of applications.

However, as the capabilities of GPT-4 increase, so do the computational requirements and costs. Businesses and individuals who use ChatGPT therefore need to weigh the benefits against the costs carefully. Finally, as language models such as GPT-4 continue to develop, let's keep paying attention and look forward to wider interaction between AI and the real world, as well as a more optimized user experience!

* Kindly include Gate2AI as the source when utilizing our original images in your articles or websites. Your acknowledgement is greatly appreciated.