Explore in 60 Seconds: Is ChatGPT Open Source?

"Is ChatGPT open source?" is a burning question among tech enthusiasts and AI developers alike. This page looks at ChatGPT's development, ownership, and accessibility: what is closed, what is open, and which open-source alternatives exist. Let's explore the facts together!

ChatGPT's Code is Closed Source

ChatGPT, developed by OpenAI, is not open-source software. Its source code and model weights are not available to the public. Hence, unlike genuine open-source projects, the wider developer community cannot obtain, modify, or redistribute ChatGPT's underlying code.

OpenAI has not open-sourced any version of its GPT language models beyond GPT-2. All later iterations, including GPT-3, GPT-3.5, and GPT-4, remain proprietary technology guarded by OpenAI.

Why is OpenAI Keeping ChatGPT Code Closed?

OpenAI likely has several motivations for maintaining such tight control over the core GPT code:

Competitive Advantage

The GPT models represent OpenAI's primary technological advantage in the market. Releasing the code openly would allow rivals to copy its approach.

Economics

OpenAI has invested immense sums into developing its AI capabilities. Open sourcing would forfeit potential value capture from these investments.

Safety

Keeping GPT proprietary lets OpenAI control how the technology is used and limit unintended consequences. Unrestricted open-source access would make misuse harder to prevent.

Commercialization

As a for-profit company, OpenAI can monetize access to GPT through proprietary API services. An open-source model would pose challenges to profiting from the technology.
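To make the API-based model concrete, here is a minimal sketch of how a developer reaches GPT in practice: by sending an HTTPS request to OpenAI's hosted service rather than by running the model's code. The endpoint and the `model` and `messages` fields follow OpenAI's public Chat Completions API. The helper name `build_chat_request`, the placeholder key, and the choice to read the key from `OPENAI_API_KEY` are illustrative assumptions. The request is only built here, not sent.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(prompt, model="gpt-3.5-turbo", api_key=None):
    """Build (but do not send) a Chat Completions request. Hypothetical helper."""
    key = api_key or os.environ.get("OPENAI_API_KEY", "")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {key}",
        },
        method="POST",
    )


# "sk-placeholder" is not a real key; it only keeps the example self-contained.
request = build_chat_request("Is ChatGPT open source?", api_key="sk-placeholder")
print(request.full_url)
print(json.loads(request.data)["messages"][0]["content"])
# Sending would be: urllib.request.urlopen(request)  (needs a real key and network access)
```

The point of the sketch is what is missing: there is no model file to download or modify. All a developer controls is the request, which is what lets access be metered and billed.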

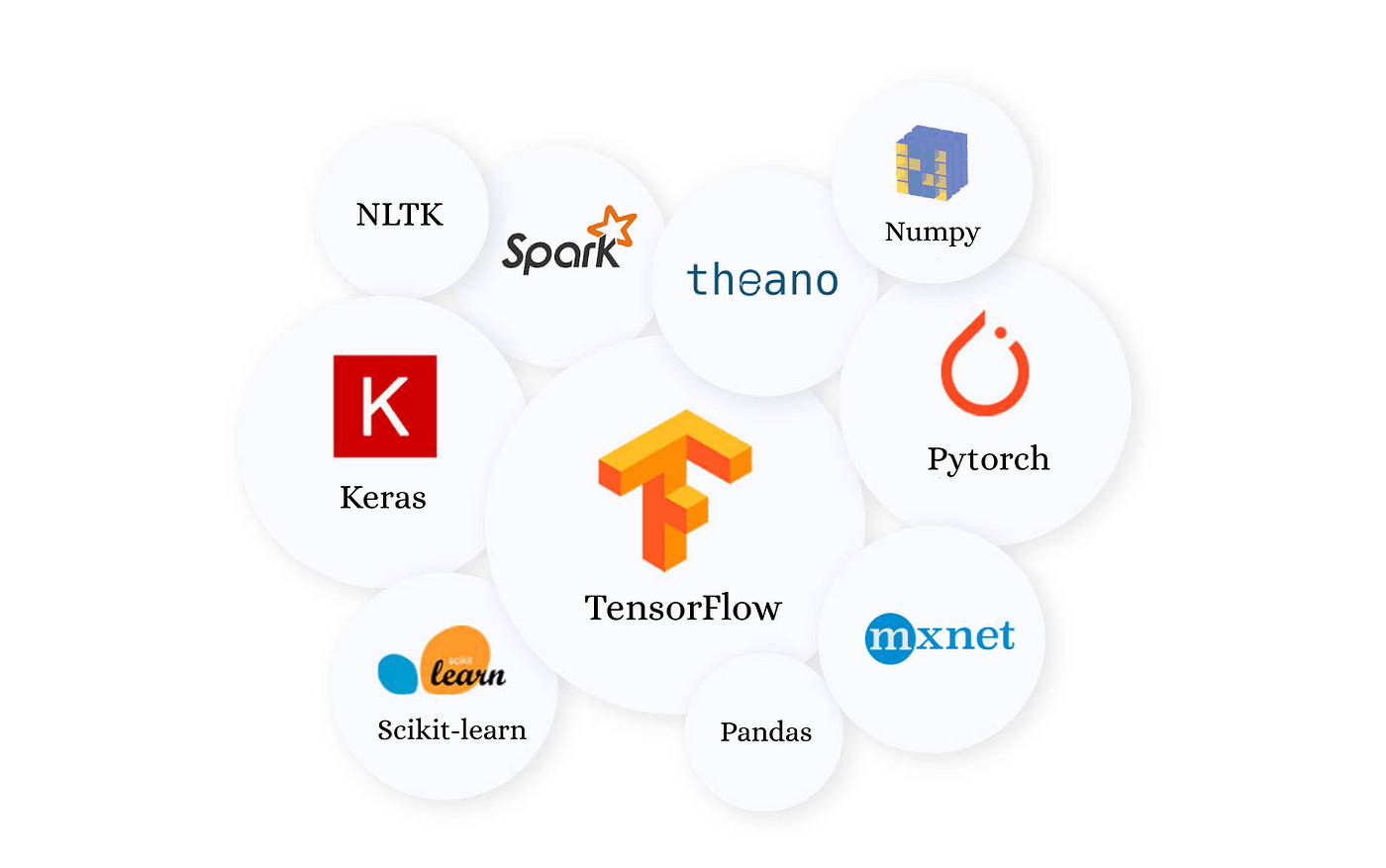

ChatGPT Relies Heavily on Open-Source Components

Although ChatGPT's core code remains closed, its creation was enabled by incorporating and building upon many critical open-source technologies:

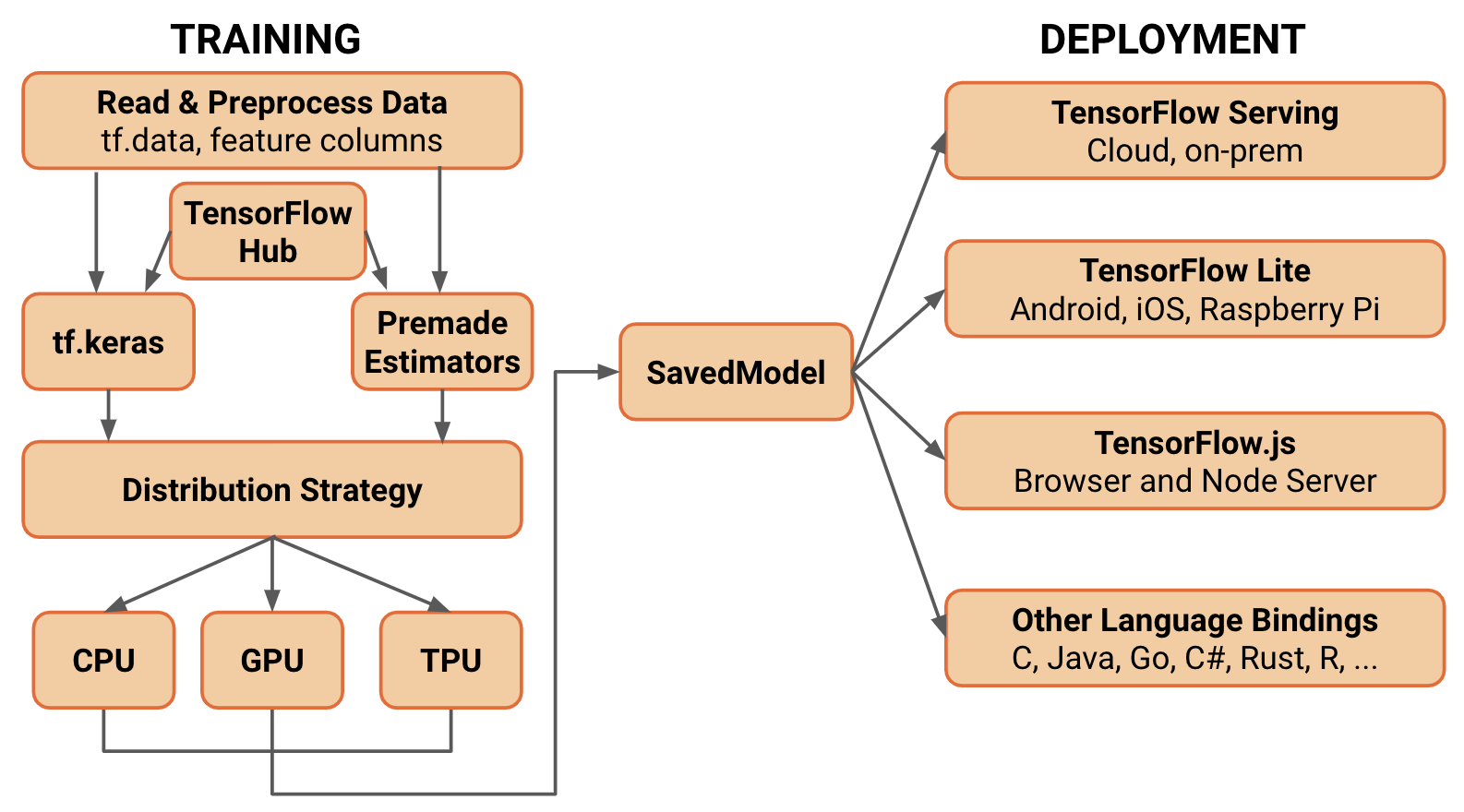

TensorFlow

OpenAI's public GPT-2 code release was written with TensorFlow, Google's open-source machine learning library, which shows how early GPT models were implemented on open tooling.

PyTorch

Meta's (formerly Facebook's) open-source PyTorch framework is widely used for training and optimizing large models, and OpenAI announced in 2020 that it was standardizing on PyTorch.

Transformers

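Hugging Face's open-source Transformers library provides implementations of transformer models, including GPT-2, and is widely used for natural language processing. OpenAI has not stated that ChatGPT itself depends on it.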

Container Technologies

Open source container platforms like Docker enabled deploying GPT models to the cloud.

Operating Systems

Linux and other open-source operating systems were foundational building blocks for OpenAI's computing infrastructure.

Open-source technologies form the bedrock of innovation in AI. The closed-source code specific to ChatGPT is built on top of this open foundation, and this mix of open and closed components is typical of complex software ecosystems.

Key Benefits of Incorporating Open Source

Leveraging open-source components provided OpenAI with several significant benefits:

Speed

Adopting proven open-source code sped up development. For instance, pre-built Python libraries helped speed up prototyping.

Cost

Using free, open-source libraries considerably reduced software licensing costs.

Adaptability

Open source offers more latitude to use, adapt, and expand software to satisfy specific requirements, like customizing machine learning algorithms for unique tasks.

These benefits apply across several categories of open-source tooling:

Deep Learning Frameworks

TensorFlow, PyTorch, and other open-source frameworks provided the foundation for designing, training and deploying the neural networks behind GPT models.

Support Libraries

Open-source Python libraries like NumPy, SciPy, and scikit-learn were critical building blocks for implementing machine learning capabilities.

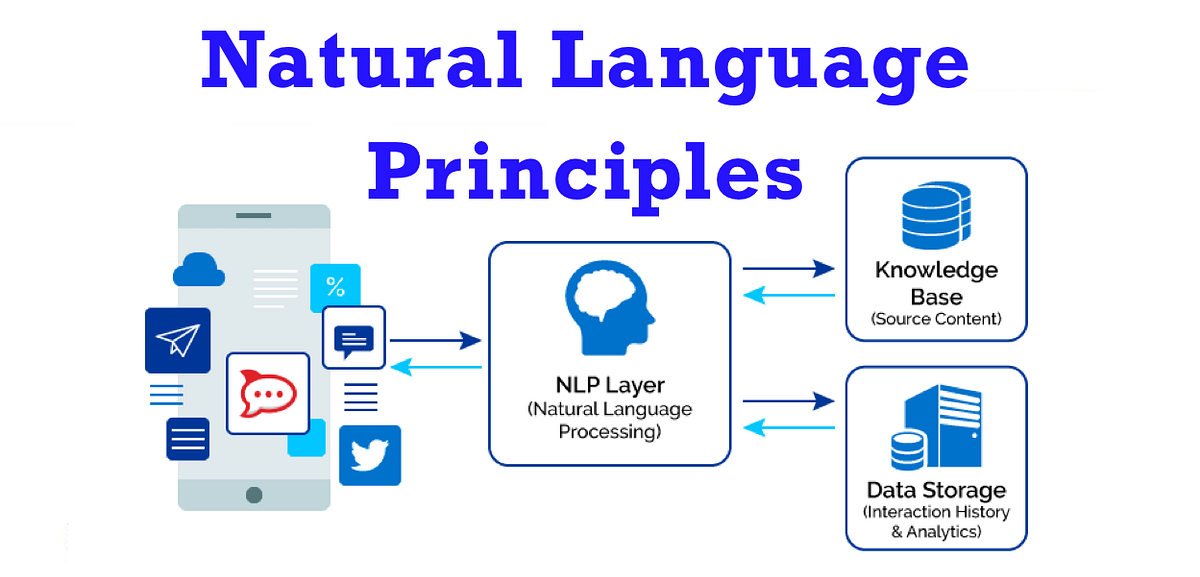

Natural Language Processing

Open-source NLP libraries such as Hugging Face Transformers, NLTK, and spaCy support tokenization, evaluation, and experimentation around models like GPT, although OpenAI has not published which of these ChatGPT relies on.

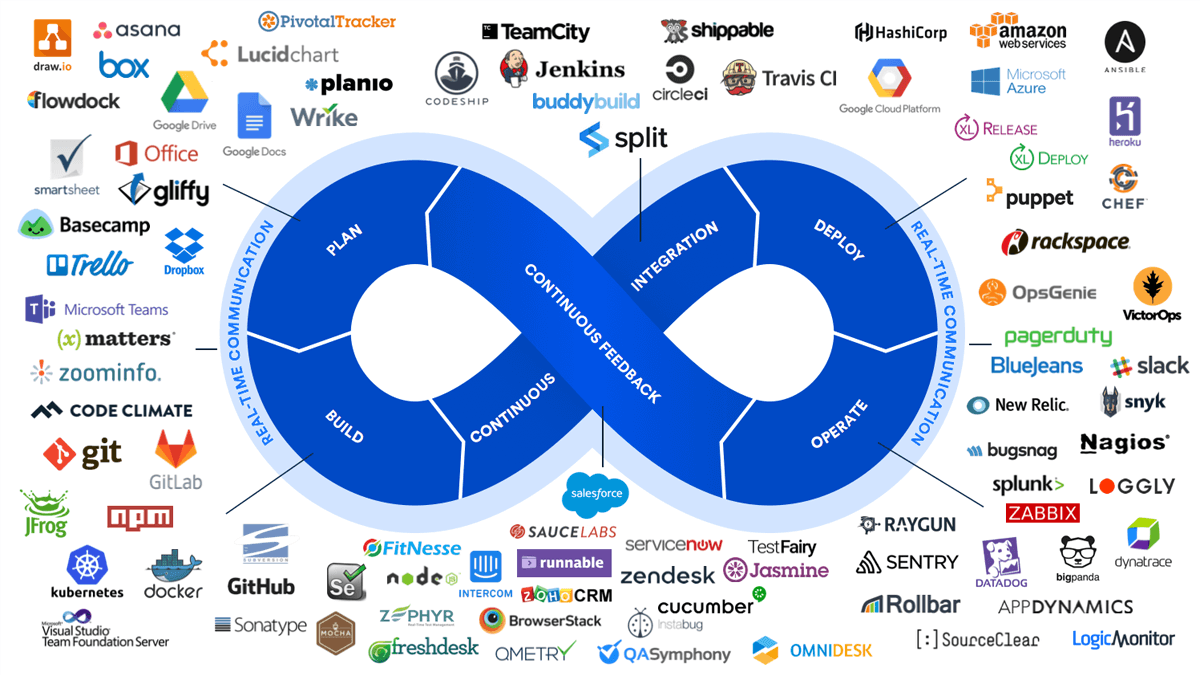

Infrastructure

Open-source cloud technologies such as Kubernetes were critical for scaling the massive compute needed to train large models.

Distributed Training

Open-source packages like Horovod and PyTorch distributed helped parallelize training across hundreds of GPUs/TPUs simultaneously.
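The core idea behind data-parallel training can be shown without any of these packages. In the sketch below, each simulated worker computes a gradient on its own shard of the data, the gradients are averaged (the role an all-reduce plays on real hardware), and every worker applies the same update. This is a pure-Python illustration under simplifying assumptions (a one-parameter model, two equal-sized shards). The function names are hypothetical and do not correspond to the Horovod or PyTorch APIs.

```python
def gradient(w, shard):
    """Gradient of mean squared error for the 1-D model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Stand-in for an all-reduce: every worker ends up with the same average."""
    return sum(values) / len(values)


def train(shards, w=0.0, lr=0.01, steps=200):
    """Run synchronous data-parallel gradient descent over the given shards."""
    for _ in range(steps):
        # On real hardware each worker computes its local gradient in parallel.
        local_grads = [gradient(w, shard) for shard in shards]
        # All workers apply the same averaged gradient, so their weights stay in sync.
        w -= lr * all_reduce_mean(local_grads)
    return w


data = [(x, 3.0 * x) for x in range(1, 9)]  # toy data generated from y = 3x
shards = [data[0:4], data[4:8]]             # two simulated workers
w = train(shards)
print(round(w, 3))
```

With equal-sized shards, the averaged gradient equals the gradient over the full dataset, so the result matches single-machine training while the work per step is split across workers.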

Monitoring

TensorBoard is open source, and experiment-tracking tools such as Comet ML and Weights & Biases offer open-source client libraries. Tools like these enable monitoring of training progress and model performance.

Optimization

Open-source implementations of techniques like model pruning and quantization optimized inference cost and performance.
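As a rough illustration of quantization, the sketch below stores weights as 8-bit integers plus a single scale factor instead of as full-precision floats. It uses simple symmetric per-tensor quantization in pure Python. Production libraries use more elaborate schemes, and the function names and sample weights here are assumptions made for the example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in quantized]


weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]  # illustrative values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(max_error <= scale / 2)
```

Each weight now needs one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half a quantization step per weight.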

Accessibility

Open-source visualization tools like TensorWatch and Netron made the trained models more interpretable.

OpenAI Was Originally Intended to be Open Source

Founded in 2015, OpenAI was a non-profit focused on open AI research. Initially, both the organization's culture and technology embodied open-source ideals:

The first two versions of OpenAI's GPT language model were released as open source to enable broad collaboration.

As a non-profit, OpenAI prioritized transparency and open research publication.

However, the substantial computational costs required to develop advanced AI systems ultimately led OpenAI to shift away from open source to attract investment.

Shift to a Closed Approach

In 2019, OpenAI created a capped-profit arm, OpenAI LP, to raise the vast funding required for projects like GPT-3 and ChatGPT. While this change allowed for significant technological advancements, it also meant that newer versions of GPT were kept closed-source.

Alternatives: Open Source Chatbot Projects

With ChatGPT's code closed off, there has been significant interest in open-source alternatives for conversational AI:

What Are the Major Open Source Language Models?

Several major language models have been open-sourced that could serve as foundations for chatbot applications:

LLaMA (Meta):

Released in 65B, 33B, 13B, and 7B parameter sizes under a non-commercial research license.

It offers performance approaching OpenAI's 175B GPT-3 on some benchmarks.

Claude (Anthropic):

Claude is sometimes mentioned alongside open models, but it is proprietary. Anthropic has not released its code or weights.

It is available only through Anthropic's own products and API, so it is not an open-source option.

GPT-NeoX (EleutherAI):

GPT-NeoX-20B, a 20-billion-parameter model, was released as open source with freely available weights, encouraging openness and transparency in the field.

Fine-Tuned Instructional Models

In addition, some groups have open-sourced GPT-style models fine-tuned specifically for robust chatbot capabilities:

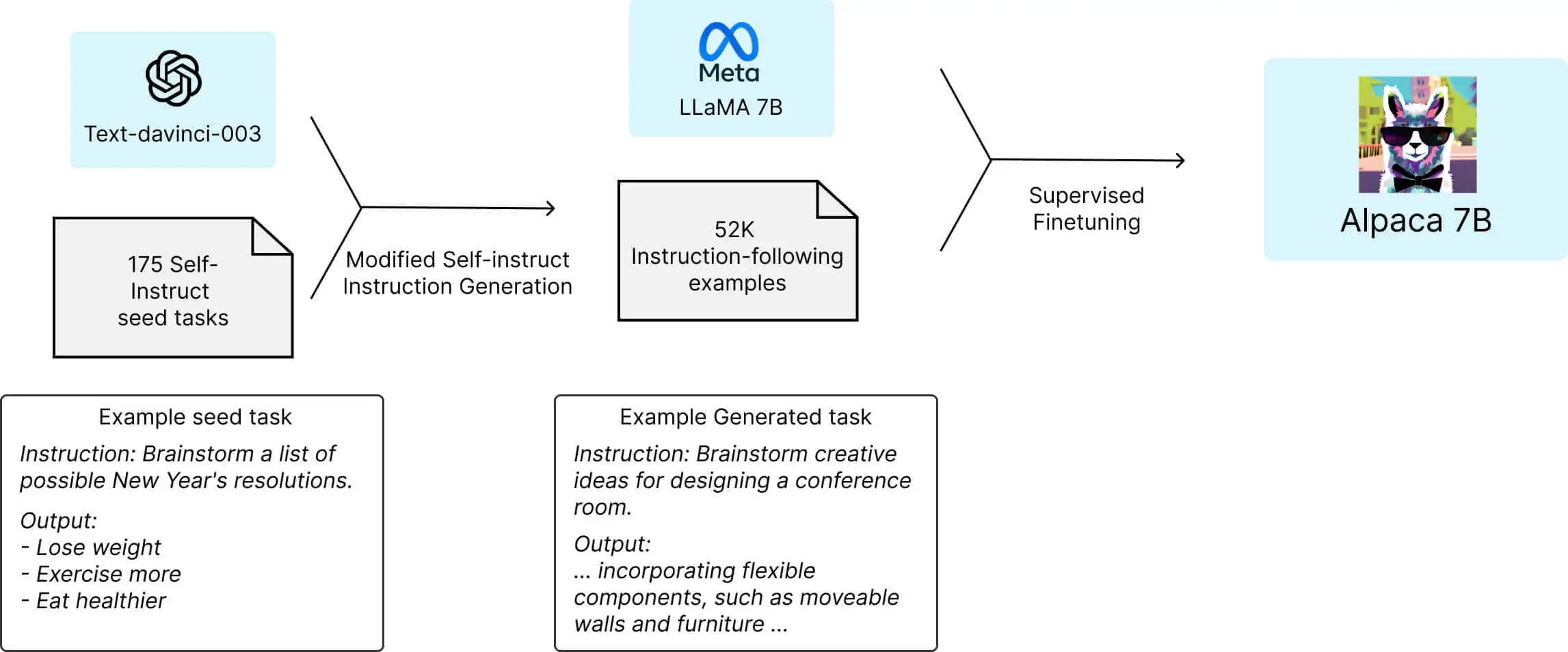

Alpaca: Stanford's Alpaca project fine-tuned the 7B LLaMA model on about 52,000 instruction-following examples. It used supervised fine-tuning, not reinforcement learning from human feedback, to produce chatbot-like responses.

Steward: Sometimes cited as an open-source chatbot fine-tuned from Claude. Anthropic has not open-sourced Claude, however, so this should not be counted as an open model.
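Fine-tuning a base model for chat typically starts from instruction-and-response pairs rendered into a fixed prompt template. The sketch below shows that data-preparation step only. The template wording is modeled on the format published with Alpaca and should be treated as an assumption, and the helper name and sample record are invented for illustration.

```python
# Template modeled on the Alpaca prompt format (assumed wording, no-input variant).
PROMPT_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def to_training_text(example):
    """Join the rendered prompt and the target response into one training string."""
    prompt = PROMPT_TEMPLATE.format(instruction=example["instruction"])
    return prompt + example["response"]


# Illustrative record; a real dataset would hold tens of thousands of these.
examples = [
    {
        "instruction": "Name an open-source language model.",
        "response": "GPT-NeoX-20B.",
    },
]

for example in examples:
    print(to_training_text(example))
```

The model is then trained to continue each prompt with its response, which is what teaches a general-purpose language model to follow instructions.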

Challenges of Running Open-Source Chatbots

While intriguing, running open-source conversational AI involves substantial practical challenges:

Computing Requirements - Large language models like GPT-3 require massive computing power for training and inference. Proprietary access to specialized chips gives groups like OpenAI an advantage.

Model Optimization - To run effectively on more limited hardware, open-source models need extensive optimization, such as distillation, pruning, and quantization. These add engineering complexity.

Deployment Framework - Unlike hosted APIs, open-source models require you to build robust infrastructure for deployment, scaling, and availability, which is a substantial amount of extra work.

Software Engineering - Properly engineering open-source models into production chatbot applications involves considerable software development beyond just ML.

Training Data - Amassing enough high-quality data to train open-source chatbots is difficult, and data gathered without careful review introduces quality and safety risks.

Content Moderation - Prioritizing safety requires vigilance to filter out potential toxicity or bias, which is challenging to manage with open systems.

Profitability - Recouping investment is harder without exclusive ownership of the model. It requires creative licensing strategies or paid services built around the model.

Adoption - Gaining user trust and adoption introduces more hurdles for open source alternatives versus recognized brands like OpenAI.
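The computing and optimization challenges above can be put into rough numbers. The sketch below estimates the memory needed just to hold a model's weights at different numeric precisions, using the model sizes mentioned in this article. It is back-of-the-envelope arithmetic (parameters times bytes per parameter) and ignores activations, caches, and framework overhead, so real requirements are higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(n_params, precision):
    """Approximate memory for the weights alone, in GB (10**9 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


models = [("LLaMA 7B", 7e9), ("LLaMA 65B", 65e9), ("GPT-3 175B", 175e9)]

for name, n_params in models:
    sizes = {p: weight_memory_gb(n_params, p) for p in BYTES_PER_PARAM}
    print(name, sizes)
```

At 16-bit precision a 7B model needs about 14 GB for its weights, while a 175B model needs about 350 GB, far more than a single consumer GPU holds. This is why quantization to 8 or 4 bits matters so much for running open models on limited hardware.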

While the open community continues working to lower these barriers, running open-source chatbots currently entails significant technical and operational challenges. However, the conveniences of commercial solutions come at the cost of user control.

Open-Source Chatbot Development Initiatives

There are also rapidly growing initiatives aiming to spur open-source conversational AI systems:

OpenAssistant: This open-source project, organized by the non-profit LAION, takes a community-driven approach to building capable and beneficial chatbots.

Kollider: They are building an ecosystem for open conversational AI, providing datasets, benchmarks, and open-source model training.

Hugging Face: This influential open ML community launched the ConversationAI project to boost open chatbot research.

Future Possibilities for Open-Source AI

Open-source conversational AI continues to mature and close the gap with commercial solutions. Benefits of open technology include:

Customization for specific use cases since the code is accessible.

Promoting inclusivity and transparency in AI development.

With sufficient resources and responsible practices, open-source chatbots could someday surpass today's proprietary alternatives. For now, OpenAI's ChatGPT remains arguably the most capable option, but it is not open source.

Is There a Free Version of ChatGPT?

Despite not being open source, ChatGPT (GPT-3.5) is currently available free of charge. Anyone with an internet connection and an email address can sign up, although heavy use can be rate-limited.

The no-cost availability of ChatGPT during its introductory research preview phase is designed to collect valuable user insights. However, free users may face waits or be unable to log in during high-demand periods because capacity is limited.

To offset the hefty computational expenses, OpenAI also sells a paid subscription, ChatGPT Plus, alongside the free tier. For now, the free version remains accessible to everyone for exploration and experimentation.

Conclusion

While ChatGPT remains proprietary, its origins and continued progress rely heavily on open-source technologies. The path forward will involve finding the right balance between open collaboration and incentives for innovation. You can now enjoy ChatGPT's remarkable capabilities while the open-source community advances conversational AI. The interplay between open and closed sources will shape the future of this transformative technology.