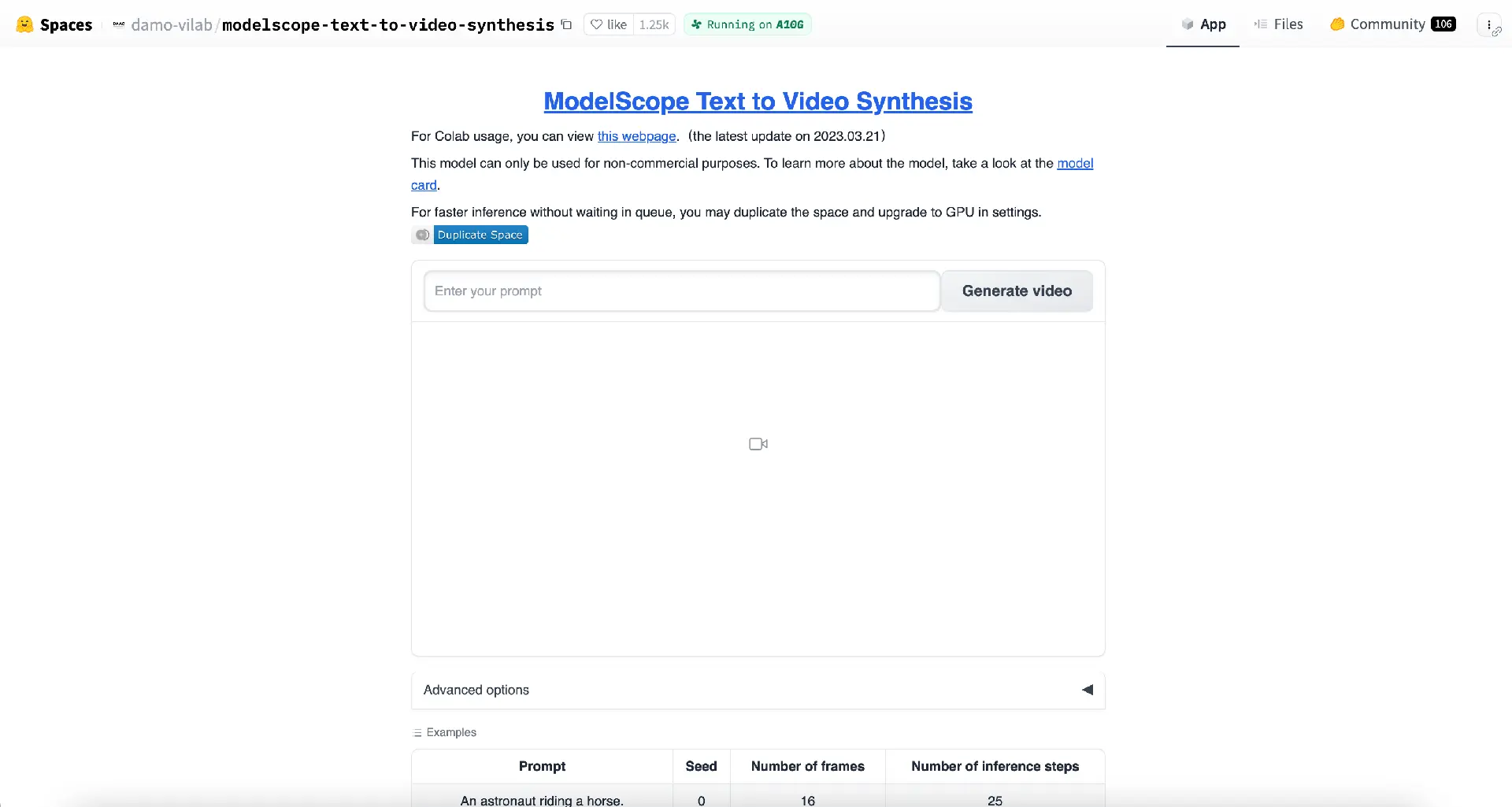

About ModelScope Text to Video

ModelScope, founded in 2022 in China, presents the innovative "Text to Video Synthesis" tool. Based on the Hugging Face platform, this state-of-the-art machine-learning application can convert textual content into compelling video formats. Users can harness this tool to generate many video types, from animated text to short-form videos, all by simply providing a text description.

Key Features

Simple Usage

Even those unfamiliar with machine learning can easily navigate and utilize the tool, as it is designed for user-friendly operation.

Linked Models and Files

To guarantee top-tier video outputs, the tool incorporates linked models and files, ensuring text conversion into visually engaging content.

Advanced Settings

Users have the flexibility to customize their outputs through several advanced settings:

Seed Configuration: Allows users to set a value between -1 to 100,000, with -1 implying a different seed with each use.

Frame Selection: Users can select between 16 to 32 frames. The video's content adjusts based on the chosen number of frames.

Inference Steps: The number of inference steps can range between 10 and 50.

Technical Overview

The synthesis relies on a multi-stage text-to-video generation diffusion model. This model has three integral sub-networks: text feature extraction, text feature-to-video latent space diffusion model, and video latent space-to-video visual space. With a staggering 1.7 billion parameters, the model only supports English input. The diffusion model employs the Unet3D structure, generating videos through an iterative denoising process starting from pure Gaussian noise video.

Applications

ModelScope's tool stands out for its versatility. Here are a few examples of videos it can generate:

A robot dancing in Times Square

A clownfish navigating through a coral reef

Ice cream melting and dripping down its cone

A surreal depiction of a cat eating food in the style of van Gogh

A hyper-realistic image of a stormy, abandoned industrial site